Istio's Ambient mode pushed mTLS and identity down the stack and out of developer pods. For most platform teams I work with, the mesh has become invisible, like DNS or container networking: it just works.

The best part about boring infrastructure is that it enables things that weren't possible before. And the most important use case to enable right now is AI.

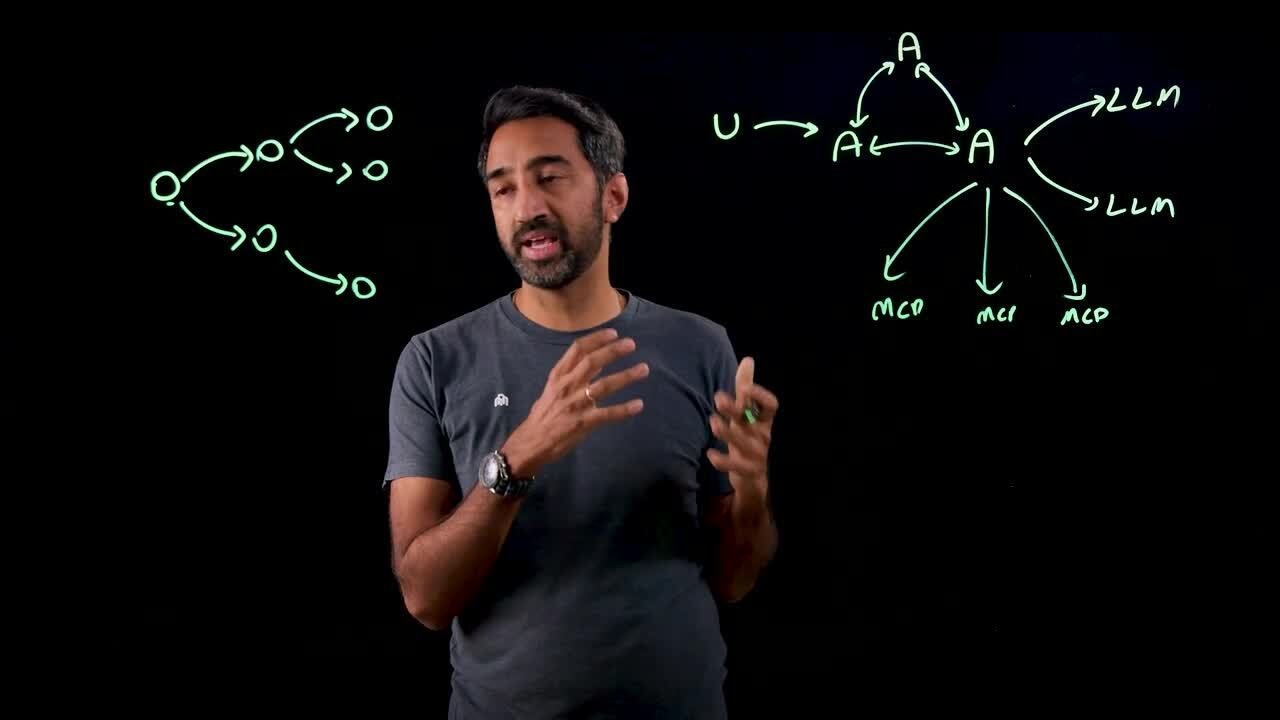

AI Agents Are Not Microservices

Every enterprise I talk to is seeing an exponential rise in new communication patterns:

- clients/agents calling LLMs

- clients/agents calling MCP servers

- agents calling other agents

It's the same distributed pattern we saw with microservices, many clients talking to many servers, with one critical difference: predictability. Microservice call paths were largely deterministic. AI doesn't follow the same path every time. Two identical prompts can result in completely different flows. An agent might call tools you didn't anticipate, reach services you didn't plan for, or compose multi-step workflows on the fly.

When behavior is unpredictable, the security shortcuts we got away with in the microservices era start to break down. Broadly scoped JWTs, skipped mTLS, and perimeter-based trust worked because we could predict the execution path that code and requests would take, so we knew the blast radius. With AI, trust boundaries have to be more granular.

The Mesh as Foundation

At the core of it, Istio Ambient provides workload identity at L4. Every connection between pods carries a SPIFFE certificate. When a service needs additional L7 routing or policy enforcement, ztunnel routes traffic through a waypoint proxy, with the caller's identity already cryptographically proven.
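Istio encodes workload identity as a SPIFFE ID of the form spiffe://<trust-domain>/ns/<namespace>/sa/<service-account>. As a rough illustration of what "the caller's identity" contains, here is a minimal Python sketch that splits such an ID into its parts. `WorkloadIdentity` and `parse_spiffe_id` are illustrative names of my own, and the example ID is made up.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class WorkloadIdentity:
    trust_domain: str
    namespace: str
    service_account: str


def parse_spiffe_id(spiffe_id: str) -> WorkloadIdentity:
    """Split an Istio-style SPIFFE ID into trust domain, namespace, and service account."""
    parsed = urlparse(spiffe_id)
    if parsed.scheme != "spiffe" or not parsed.netloc:
        raise ValueError(f"not a SPIFFE ID: {spiffe_id!r}")
    # Expected path layout: /ns/<namespace>/sa/<service-account>
    parts = parsed.path.strip("/").split("/")
    if len(parts) != 4 or parts[0] != "ns" or parts[2] != "sa":
        raise ValueError(f"unexpected SPIFFE path: {parsed.path!r}")
    return WorkloadIdentity(parsed.netloc, parts[1], parts[3])


identity = parse_spiffe_id("spiffe://cluster.local/ns/agents/sa/support-agent")
print(identity.service_account)  # support-agent
```

The service account is the part the policies later in this post key on.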

The absolute best part of Ambient is that the waypoint is pluggable.

It doesn't have to be the default Envoy waypoint that comes with Istio. For AI traffic, we can use agentgateway, an AI-native proxy that understands LLM request/response formats and speaks MCP natively. Running it as a waypoint means that it runs at the same HBONE enforcement hop, inheriting the same SPIFFE identity chain. For non-AI traffic, you can continue using the standard Envoy waypoint.

The mesh handles identity and encryption. Agentgateway handles AI-aware security, routing, and observability for AI traffic. Envoy handles the rest.

What the Waypoint Enables

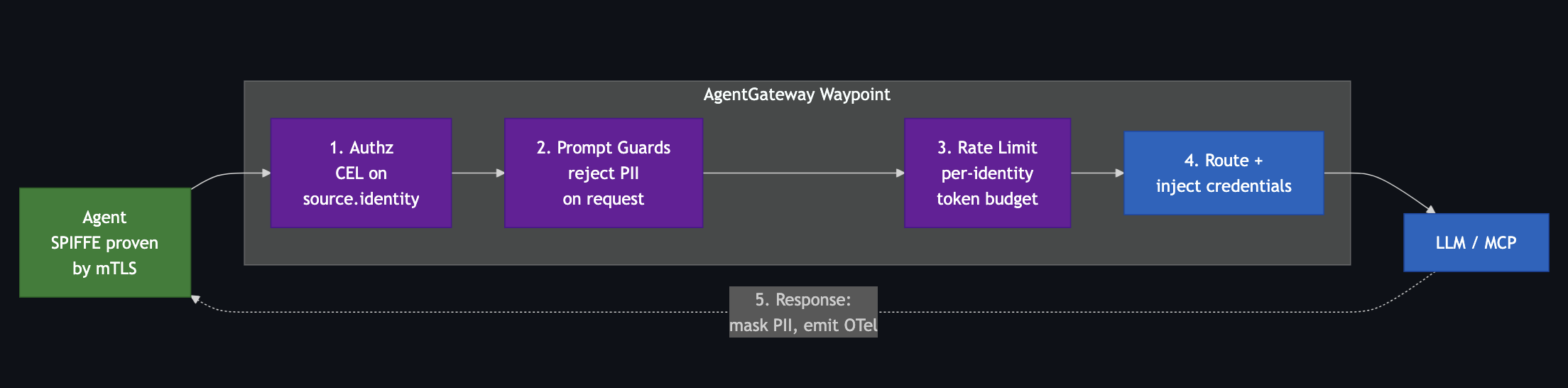

Because the mesh already proved who the caller is, the waypoint gets to focus entirely on AI-first concerns: tokens, tools, providers, prompts.

Authorization on mesh identity

You write CEL expressions that reference the caller's SPIFFE identity directly from the mTLS connection. No JWT validation step, no API key lookup, no token introspection.

    # EnterpriseAgentgatewayPolicy
    targetRefs:
    - group: gateway.networking.k8s.io
      kind: HTTPRoute
      name: openai
    traffic:
      authorization:
        action: Allow
        policy:
          matchExpressions:
          - 'source.identity.serviceAccount == "customer-agent"'
          - 'source.identity.serviceAccount == "support-agent"'

A rogue agent (even one on the same node, in the same namespace) gets rejected at the waypoint and the connection doesn't make it past that.
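To make the allow-list semantics concrete, here is a small Python model of the decision. It is a sketch, not how the waypoint evaluates CEL. It assumes that a request is allowed when any one expression matches and denied otherwise, and `ALLOW_RULES` and `is_allowed` are hypothetical names.

```python
from typing import Callable, Dict, List

# Hypothetical stand-in for the `source.identity` fields exposed to CEL.
Source = Dict[str, str]
Rule = Callable[[Source], bool]

# One predicate per match expression in the policy.
ALLOW_RULES: List[Rule] = [
    lambda src: src.get("serviceAccount") == "customer-agent",
    lambda src: src.get("serviceAccount") == "support-agent",
]


def is_allowed(source: Source, rules: List[Rule] = ALLOW_RULES) -> bool:
    """Allow when at least one rule matches; anything else is denied."""
    return any(rule(source) for rule in rules)


print(is_allowed({"serviceAccount": "support-agent"}))  # True
print(is_allowed({"serviceAccount": "rogue-agent"}))    # False
```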

LLM routing with credential injection

The agent calls http://myllm.com/v1/chat/completions with plain HTTP and no API key. The waypoint routes to the desired LLM backend, injects the API key from a Kubernetes Secret, and originates TLS to the provider. The agent never sees a credential.

    # AgentgatewayBackend
    ai:
      provider:
        anthropic:
          model: "claude-sonnet-4-20250514"
    policies:
      auth:
        secretRef:
          name: anthropic-secret # API key here, never in app
    ---
    # HTTPRoute
    parentRefs:
    - name: agentgateway-waypoint
    rules:
    - matches:
      - path:
          type: PathPrefix
          value: /anthropic
      backendRefs:
      - name: anthropic
        group: agentgateway.dev
        kind: AgentgatewayBackend

Switching LLM providers is a config change at the waypoint; the agent's code doesn't change.

API keys are the most common pattern, but the same model handles AWS sigv4 for Bedrock, GCP IAM for Vertex, Azure keys, and OAuth token exchange for SaaS upstreams. The credential never lives in the agent.
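Conceptually, the injection step is small. The following Python sketch shows the idea for the API-key case only, and it is not agentgateway's implementation. `SECRETS` and `build_upstream_headers` are hypothetical names, the dict stands in for a Kubernetes Secret lookup, and stripping any credential headers the client sent is an assumption of the sketch. `x-api-key` is the header Anthropic's API expects.

```python
from typing import Dict, Mapping

# Hypothetical in-memory stand-in for a Kubernetes Secret lookup.
SECRETS: Dict[str, str] = {"anthropic-secret": "sk-example-not-a-real-key"}

# Headers the client should never be able to set for the upstream (assumption).
CREDENTIAL_HEADERS = {"authorization", "x-api-key"}


def build_upstream_headers(client_headers: Mapping[str, str], secret_name: str) -> Dict[str, str]:
    """Copy the client's headers, drop credential-like ones, then add the provider key."""
    headers = {
        name: value
        for name, value in client_headers.items()
        if name.lower() not in CREDENTIAL_HEADERS
    }
    headers["x-api-key"] = SECRETS[secret_name]
    return headers


incoming = {"content-type": "application/json"}
outgoing = build_upstream_headers(incoming, "anthropic-secret")
print("x-api-key" in incoming)  # False
print("x-api-key" in outgoing)  # True
```

The agent's own request never carries the key; it only exists on the hop between the waypoint and the provider.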

Tool-level access control for MCP

The waypoint parses MCP natively and evaluates CEL expressions against the specific tool being called. Tools that don't match are hidden from tools/list and rejected on tools/call. The agent won't see tools it's not authorized to use.

When the waypoint federates multiple MCP servers behind a single endpoint, tool names collide (every server has a search, every server has an echo). Use mcp.tool.target to scope by the upstream server, alongside mcp.tool.name:

    # EnterpriseAgentgatewayPolicy
    targetRefs:
    - group: agentgateway.dev
      kind: AgentgatewayBackend
      name: federated-mcp
    backend:
      mcp:
        authorization:
          action: Allow
          policy:
            matchExpressions:
            # support-agent: read-only tools on the github server
            - 'source.identity.serviceAccount == "support-agent" &&
               mcp.tool.target == "github" &&
               mcp.tool.name in ["get_me", "list_issues"]'
            # release-bot: write tools, but only on the github server
            - 'source.identity.serviceAccount == "release-bot" &&
               mcp.tool.target == "github" &&
               mcp.tool.name in ["create_release", "list_commits"]'
            # data-agent: read-only on the catalog server
            - 'source.identity.serviceAccount == "data-agent" &&
               mcp.tool.target == "catalog" &&
               mcp.tool.name in ["search", "describe"]'

When you're dealing with probabilistic execution, having the policy layer be deterministic matters a lot.
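Here is a minimal Python model of that behavior, mirroring the three rules in the policy above. It is a sketch of the semantics, not the waypoint's code: `ALLOWED`, `can_call`, and `visible_tools` are hypothetical names, and the example tool catalog is made up.

```python
from typing import Dict, List, Set, Tuple

# (service account, upstream target) -> tool names that identity may use.
ALLOWED: Dict[Tuple[str, str], Set[str]] = {
    ("support-agent", "github"): {"get_me", "list_issues"},
    ("release-bot", "github"): {"create_release", "list_commits"},
    ("data-agent", "catalog"): {"search", "describe"},
}


def can_call(service_account: str, target: str, tool: str) -> bool:
    """Decision for tools/call: allowed only if the (identity, target) pair lists the tool."""
    return tool in ALLOWED.get((service_account, target), set())


def visible_tools(service_account: str, tools_by_target: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Decision for tools/list: return only the tools this identity could also call."""
    result: Dict[str, List[str]] = {}
    for target, tools in tools_by_target.items():
        permitted = [tool for tool in tools if can_call(service_account, target, tool)]
        if permitted:
            result[target] = permitted
    return result


federated = {
    "github": ["get_me", "list_issues", "create_release"],
    "catalog": ["search", "describe"],
}
print(visible_tools("support-agent", federated))  # {'github': ['get_me', 'list_issues']}
print(can_call("support-agent", "github", "create_release"))  # False
```

Using the same predicate for listing and calling keeps the two views consistent: a tool the agent cannot see is also a tool it cannot invoke.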

Token-based rate limiting per identity

Rate limit buckets are keyed on the caller's SPIFFE service account and count actual LLM tokens consumed rather than just requests. Batch-processing agents and customer-facing agents get different budgets, enforced at the infrastructure layer.
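As a rough model of what "counting tokens per identity" means, here is a Python sketch of a fixed-window token budget. The fixed-window algorithm is my assumption, since the limiter's internals aren't described here, and `TokenBudget` is a hypothetical name. The check happens before the call and the usage is recorded after it, because the token count is only known once the LLM has responded.

```python
from typing import Dict, Tuple


class TokenBudget:
    """Fixed-window budget of LLM tokens, tracked separately per service account."""

    def __init__(self, tokens_per_window: int, window_seconds: int = 3600) -> None:
        self.limit = tokens_per_window
        self.window = window_seconds
        self._used: Dict[Tuple[str, int], int] = {}

    def _key(self, service_account: str, now: float) -> Tuple[str, int]:
        # Each identity gets one counter per window index.
        return (service_account, int(now // self.window))

    def allow(self, service_account: str, now: float) -> bool:
        """True while the caller has budget left in the current window."""
        return self._used.get(self._key(service_account, now), 0) < self.limit

    def record(self, service_account: str, tokens: int, now: float) -> None:
        """Add the tokens a completed LLM call actually consumed."""
        key = self._key(service_account, now)
        self._used[key] = self._used.get(key, 0) + tokens


budget = TokenBudget(tokens_per_window=100_000)
budget.record("batch-agent", 120_000, now=0)
print(budget.allow("batch-agent", now=60))    # False
print(budget.allow("support-agent", now=60))  # True
print(budget.allow("batch-agent", now=3600))  # True
```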

    # RateLimitConfig
    raw:
      descriptors:
      - key: agent
        rateLimit:
          requestsPerUnit: 100000 # tokens
          unit: HOUR
      rateLimits:
      - actions:
        - cel:
            expression: 'source.identity.serviceAccount'
            key: agent
        type: TOKEN # count LLM tokens, not requests

Prompt guards

PII patterns and built-in detectors catch sensitive content before it leaves the cluster. The guardrails live outside the model, where prompt injection can't compromise them.
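The mechanics are easy to picture with a small Python sketch: reject a request that contains a sensitive pattern, and mask the same patterns in a response. The two regexes are deliberately simplified stand-ins of my own, not the built-in detectors, and `PATTERNS`, `guard_request`, and `mask_response` are hypothetical names.

```python
import re
from typing import Dict, Optional, Pattern

# Simplified illustrative patterns; real detectors are more thorough.
PATTERNS: Dict[str, Pattern[str]] = {
    "Ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "CreditCard": re.compile(r"\b\d{4}[ -]?\d{4}[ -]?\d{4}[ -]?\d{4}\b"),
}


def guard_request(prompt: str) -> Optional[str]:
    """Return a rejection message if the prompt matches any pattern, else None."""
    for pattern in PATTERNS.values():
        if pattern.search(prompt):
            return "Blocked by content safety policy"
    return None


def mask_response(text: str) -> str:
    """Replace every match in the model's response with a placeholder."""
    for name, pattern in PATTERNS.items():
        text = pattern.sub(f"<{name}>", text)
    return text


print(guard_request("My SSN is 123-45-6789"))         # Blocked by content safety policy
print(mask_response("Card 4111 1111 1111 1111 ok"))   # Card <CreditCard> ok
```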

    # EnterpriseAgentgatewayPolicy
    targetRefs:
    - group: gateway.networking.k8s.io
      kind: HTTPRoute
      name: openai
    backend:
      ai:
        promptGuard:
          request:
          - response:
              message: "Blocked by content safety policy"
            regex:
              action: Reject
              builtins: [CreditCard, Ssn, Email]
          response:
          - regex:
              builtins: [CreditCard, Ssn]
              action: Mask

Unified observability

Istio gives you request rates, latencies, and connection metrics for every service. The agentgateway waypoint layers AI-native telemetry on top, following OpenTelemetry GenAI semantic conventions: gen_ai.provider.name, gen_ai.request.model, gen_ai.usage.input_tokens, gen_ai.usage.output_tokens. For MCP: mcp.method, mcp.session.id, mcp.tool.name. Every log entry carries the caller's SPIFFE identity.

You can answer "which agent consumed the most tokens last week" or "which team's agents are calling which tools" without instrumenting a single line of application code.
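For example, the "most tokens" question reduces to a group-by over exported log records. The Python sketch below assumes each record is a flat dict with the GenAI token attributes named above; the `source.identity` key for the caller's SPIFFE ID is a hypothetical field name, and the sample records are made up.

```python
from collections import Counter
from typing import Iterable, Mapping


def tokens_by_identity(records: Iterable[Mapping[str, object]]) -> Counter:
    """Sum input and output tokens per caller identity."""
    totals: Counter = Counter()
    for record in records:
        used = int(record.get("gen_ai.usage.input_tokens", 0)) + int(
            record.get("gen_ai.usage.output_tokens", 0)
        )
        # "source.identity" is an assumed field name for the caller's SPIFFE ID.
        totals[str(record["source.identity"])] += used
    return totals


logs = [
    {"source.identity": "spiffe://cluster.local/ns/agents/sa/support-agent",
     "gen_ai.usage.input_tokens": 1200, "gen_ai.usage.output_tokens": 300},
    {"source.identity": "spiffe://cluster.local/ns/agents/sa/data-agent",
     "gen_ai.usage.input_tokens": 400, "gen_ai.usage.output_tokens": 100},
    {"source.identity": "spiffe://cluster.local/ns/agents/sa/support-agent",
     "gen_ai.usage.input_tokens": 800, "gen_ai.usage.output_tokens": 200},
]
print(tokens_by_identity(logs).most_common(1))
# [('spiffe://cluster.local/ns/agents/sa/support-agent', 2500)]
```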

None of this requires application changes. The agent pod has no idea the waypoint is there.

All of these policies stack on the same waypoint and evaluate in order on each request.

Why Identity Is the Unlock

Most people building agentic platforms are underestimating the identity problem. Every AI gateway I've looked at struggles with this. Who is calling this LLM? Which agent is making this MCP tool call? The typical answer involves JWT tokens, API keys, and custom headers that application teams have to manage, rotate, and debug.

A security architect at one of the financial services orgs I work with put it bluntly: "once you have an id claim, you can pretty much call anything on the network." Three separate employee IdPs, stateful tokens that require introspection on every call, no clean mechanism to scope down what individual agents can access.

Agents make this worse. In microservices, identity usually meant the workload, with end-user context layered in via a JWT when needed. Agents introduce three things to track: the human user, the agent acting on their behalf, and the delegation relationship between them. If all of that collapses into a single service account or a broad id claim, security teams can't answer the basic question: who is actually doing this, and should they be allowed to?

The SPIFFE cert is the identity. The waypoint uses it for authorization decisions, rate limit bucket keys, and structured audit logs. One identity system, zero application-layer token management.

Multi-Cluster

Production agentic deployments won't live in a single cluster. They'll span regions, compliance boundaries, and team boundaries. An agent in one cluster will need to call an MCP tool server in another.

Istio Ambient already provides multi-cluster service discovery and routing. Clusters share a root of trust with per-cluster intermediate CAs, so SPIFFE identities are verifiable across cluster boundaries.

The Agentic Mesh

The pattern I keep seeing in customer conversations is that everyone is reinventing agent security from scratch, with JWT plumbing, header conventions, and per-app rate limit middleware. Teams that already run ambient mesh tend to figure out pretty quickly that most of that isn't theirs to build, because the identity layer is already there and an AI-native waypoint gives them the rest of the L7 controls on the same enforcement hop.

There isn't really a separate trust model to design here or a parallel security stack to operate. The waypoint slot in ambient was always pluggable, and AI is just the first compelling reason to plug something other than Envoy into it.
