What is the Linkerd service mesh?

Linkerd is a service mesh platform based on a network micro-proxy from Buoyant. It was designed for containerized environments and supports platforms like Kubernetes and Docker. The platform is open source.

Linkerd can alleviate the problems associated with operating and managing microservices applications. Interactions between services are a key component of application runtime behavior. Linkerd provides a layer of abstraction to help control these communications, giving developers better visibility, improving network efficiency and enabling more robust security.

Linkerd architecture explained

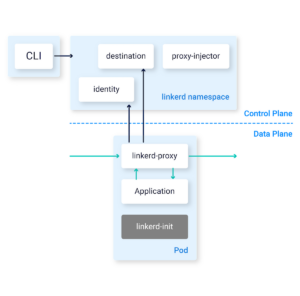

The Linkerd architecture has two main elements:

- The control plane: a set of services that control Linkerd as a whole.

- The data plane: transparent micro-proxies that run alongside each service instance in the same Kubernetes pod, a pattern known as a “sidecar container”. These proxies automatically handle all TCP traffic to and from the service and communicate with the control plane for configuration.

Linkerd also provides a CLI that can interact with the control and data planes.

Image Source: Linkerd
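To make the sidecar model more concrete, here is a minimal sketch of how a workload can be marked for proxy injection on Kubernetes. It assumes the `linkerd.io/inject: enabled` pod annotation used by current Linkerd releases. The `mark_for_injection` helper and the sample `web` Deployment are hypothetical and exist only for illustration. The sketch edits a manifest held as a Python dictionary and does not talk to a cluster.

```python
import copy

# Annotation read by Linkerd's proxy injector; the rest of this sketch is illustrative.
INJECT_ANNOTATION = "linkerd.io/inject"


def mark_for_injection(deployment: dict) -> dict:
    """Return a copy of a Deployment manifest whose pod template requests a sidecar."""
    marked = copy.deepcopy(deployment)  # leave the caller's manifest untouched
    template = marked.setdefault("spec", {}).setdefault("template", {})
    metadata = template.setdefault("metadata", {})
    annotations = metadata.setdefault("annotations", {})
    annotations[INJECT_ANNOTATION] = "enabled"
    return marked


if __name__ == "__main__":
    # Hypothetical Deployment for a service called "web".
    deployment = {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": "web"},
        "spec": {
            "template": {
                "metadata": {"labels": {"app": "web"}},
                "spec": {"containers": [{"name": "web", "image": "example/web:1.0"}]},
            }
        },
    }
    marked = mark_for_injection(deployment)
    print(marked["spec"]["template"]["metadata"]["annotations"])
```

The annotation sits on the pod template rather than on the Deployment itself, because the proxy is added to each pod as it is created.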

The Linkerd Data Plane

The proxy components that make up Linkerd’s data plane are usually injected via the command line, making it easy to add the proxy to new services. When a service has a proxy component, it becomes part of the service mesh, and can leverage the following features:

- Management of HTTP, HTTP/2, TCP, and WebSocket traffic

- Collection of telemetry data for HTTP and TCP traffic

- Load balancing at Layer 7 (application layer) for HTTP traffic

- Load balancing at Layer 4 (transport layer) for non-HTTP traffic

- TLS encryption applied automatically to communication between meshed services

- Failure diagnosis with a “tap” that enables investigation of network traffic
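The difference between Layer 4 and Layer 7 load balancing in the list above is easiest to see with a toy simulation. The sketch below is not Linkerd code. Round-robin is used only to keep the output deterministic and is not a claim about the algorithm Linkerd's proxy uses. The workload and the pod names are made up.

```python
from collections import Counter
from itertools import cycle


def balance_per_connection(requests_per_connection, backends):
    """Layer 4 style: pick a backend once per connection; every request on it follows."""
    picker = cycle(backends)
    load = Counter({b: 0 for b in backends})
    for request_count in requests_per_connection:
        backend = next(picker)          # decision made when the connection opens
        load[backend] += request_count  # all of its requests land on that backend
    return dict(load)


def balance_per_request(requests_per_connection, backends):
    """Layer 7 style: pick a backend for each request, regardless of connection."""
    picker = cycle(backends)
    load = Counter({b: 0 for b in backends})
    for request_count in requests_per_connection:
        for _ in range(request_count):
            load[next(picker)] += 1     # decision made per request
    return dict(load)


if __name__ == "__main__":
    # Hypothetical workload: one busy long-lived connection and two quiet ones.
    workload = [900, 50, 50]
    backends = ["pod-a", "pod-b", "pod-c"]
    print("per connection:", balance_per_connection(workload, backends))
    print("per request:   ", balance_per_request(workload, backends))
```

With this workload, per-connection balancing leaves one pod serving 900 of the 1,000 requests, while per-request balancing spreads them almost evenly. This is why request-aware balancing matters for protocols such as HTTP/2 that multiplex many requests over a single long-lived connection.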

The Linkerd Control Plane

Linkerd’s control plane has two primary interfaces: an API and a web console. It centrally defines proxy configuration and collects metrics from the data plane. The control plane has the following primary components:

- Controller: a collection of containers that implement the public controller APIs, proxy APIs, and other internal functions.

- Web: implements the end-user UI.

- Metrics: built as a modified version of Prometheus, typically combined with a Grafana instance to provide a visual dashboard.

Linkerd’s control plane container comes with a pre-installed Linkerd proxy instance, so the control plane can also join the service mesh and can be controlled like any other service.

Linkerd design principles

Linkerd aims to make operations and maintenance simple for service mesh operators. It follows three design principles:

- Keep it simple

There are no complex instructions or workflows to learn. Components should be well-defined, and their behavior should be understandable and predictable with minimal effort. This doesn’t mean Linkerd manages all operations through one-click wizards. It means that every aspect of the platform’s behavior must be clear, unambiguous, and well-defined.

- Minimize resource requirements

Linkerd should have the lowest possible performance impact on its users, especially for data plane instances. The data plane proxy should consume as little memory and CPU as possible. A single linkerd-proxy instance can handle thousands of requests per second with less than 10 MB of memory and 25% of an average CPU core.

- Just works

Linkerd does not usually break existing applications or require complex configuration for day-to-day tasks. Customizing Linkerd’s behavior does require configuration, but the default behavior should work without extensive setup. For instance, Linkerd provides automated Layer 7 protocol detection and rerouting of TCP traffic within pods, to reduce the need for configuration.
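As a rough illustration of what automated protocol detection involves, the sketch below classifies a connection by peeking at the first bytes a client sends. It is a simplified stand-in and not Linkerd's actual detection logic. The `detect_protocol` function and its return labels are hypothetical. The only fixed value is the HTTP/2 client connection preface, which comes from the HTTP/2 specification.

```python
# Client connection preface defined by the HTTP/2 specification.
HTTP2_PREFACE = b"PRI * HTTP/2.0\r\n\r\nSM\r\n\r\n"

# Common HTTP/1.x request methods (not an exhaustive list).
HTTP1_METHODS = (
    b"GET", b"HEAD", b"POST", b"PUT", b"DELETE",
    b"OPTIONS", b"PATCH", b"CONNECT", b"TRACE",
)


def detect_protocol(first_bytes: bytes) -> str:
    """Classify a connection from the first bytes the client sent."""
    if first_bytes.startswith(HTTP2_PREFACE):
        return "http/2"
    # An HTTP/1.x request line looks like: METHOD SP target SP HTTP/1.x
    request_line = first_bytes.split(b"\r\n", 1)[0]
    parts = request_line.split(b" ")
    if len(parts) == 3 and parts[0] in HTTP1_METHODS and parts[2].startswith(b"HTTP/1."):
        return "http/1"
    # Anything else is treated as opaque TCP and balanced at Layer 4.
    return "opaque-tcp"


if __name__ == "__main__":
    print(detect_protocol(b"GET /health HTTP/1.1\r\nHost: web\r\n\r\n"))
    print(detect_protocol(HTTP2_PREFACE))
    print(detect_protocol(b"\x16\x03\x01\x02\x00"))  # start of a TLS handshake
```

Because the decision is made from the traffic itself, the operator does not have to declare which ports carry HTTP and which carry other protocols, which is the point of the "just works" principle.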

Linkerd deployment models

Linkerd operates as a standalone agent that is independent of languages and libraries and is suited to both microservices and containers. There are two main deployment models:

- Host-specific deployments: one Linkerd instance is attached to each physical or virtual host, and the traffic of all application service instances on that host is routed through it.

- Sidecar proxy deployments: one Linkerd instance is installed per application service instance. These are useful for container-based applications, including any microservices applications that use Kubernetes pods or Docker containers.

Linkerd communicates with application services through one of these configurations:

- service-to-linker: each service instance routes its outgoing traffic through its own Linkerd instance, which applies the traffic rules.

- linker-to-service: the Linkerd sidecar receives incoming traffic and routes it to the service instance (the instance does not receive traffic directly).

- linker-to-linker: a hybrid configuration in which a Linkerd instance handles incoming traffic and routes it to the service instance, and the service instance routes its outgoing traffic through the same Linkerd instance.
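The three configurations above differ only in which proxies a request passes through. The sketch below models that as a list of hops. It is an illustration of the descriptions in this section, not Linkerd code. The `request_path` function, the `linkerd(...)` hop labels, and the service names are hypothetical.

```python
def request_path(configuration: str, source: str, destination: str) -> list:
    """Return the hops a request takes from source to destination."""
    outbound_proxy = f"linkerd({source})"      # proxy next to the calling service
    inbound_proxy = f"linkerd({destination})"  # proxy next to the receiving service
    if configuration == "service-to-linker":
        # Only the caller's outgoing traffic goes through a proxy.
        return [source, outbound_proxy, destination]
    if configuration == "linker-to-service":
        # Only the receiver's incoming traffic goes through a proxy.
        return [source, inbound_proxy, destination]
    if configuration == "linker-to-linker":
        # Both sides are proxied.
        return [source, outbound_proxy, inbound_proxy, destination]
    raise ValueError(f"unknown configuration: {configuration}")


if __name__ == "__main__":
    for name in ("service-to-linker", "linker-to-service", "linker-to-linker"):
        print(name, "->", " -> ".join(request_path(name, "orders", "payments")))
```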

Linkerd and Gloo Mesh

Although Linkerd has been on the market for quite a while, Solo.io has decided to support only Istio in the Gloo Mesh product. Istio has a more robust community of contributors to the open source project and has been proven in large production enterprise environments.